Why Not Explain? Effects of Explanations on Human Perceptions of Autonomous Driving

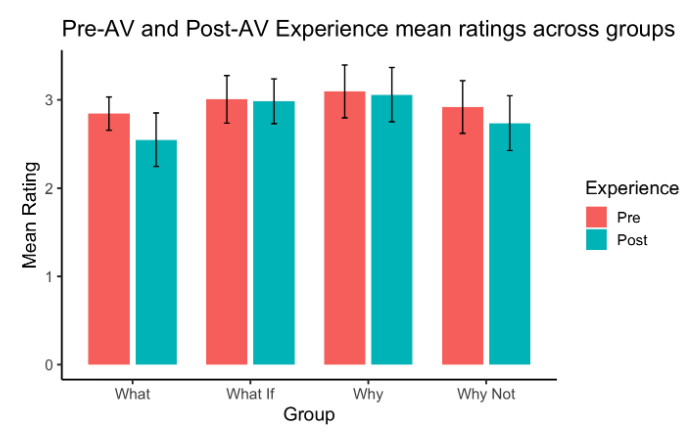

Abstract

Autonomous vehicles (AVs) have the potential to change the way we commute, travel, and transport our goods. The deployment of AVs in society, however, requires that people understand, accept, and trust them. Intelligible explanations can help different AV stakeholders assess AVs' behaviours and, in turn, increase their confidence and foster trust. In a user study (N = 101), we examined different explanation types (based on investigatory queries) provided by an AV and their effects on people, assessed using determinant factors of trust. Our quantitative and qualitative analyses show that explanations with causal attributions improved task performance and understanding when assessing driving events but did not directly improve perceived trust. This underlines the potential need for additional measures and research to enhance trust in AVs.